The All-Seeing "i": Apple Just Declared War on Your Privacy

“Under His Eye,” she says. The right farewell. “Under His Eye,” I reply, and she gives a little nod.

By now you've probably heard that Apple plans to push a new and uniquely intrusive surveillance system out to many of the more than one billion iPhones it has sold, which all run the behemoth's proprietary, take-it-or-leave-it software. This new offensive is tentatively slated to begin with the launch of iOS 15, almost certainly in mid-September, with the devices of its US user base designated as the initial targets. We’re told that other countries will be spared, but not for long.

You might have noticed that I haven’t mentioned which problem it is that Apple is purporting to solve. Why? Because it doesn’t matter.

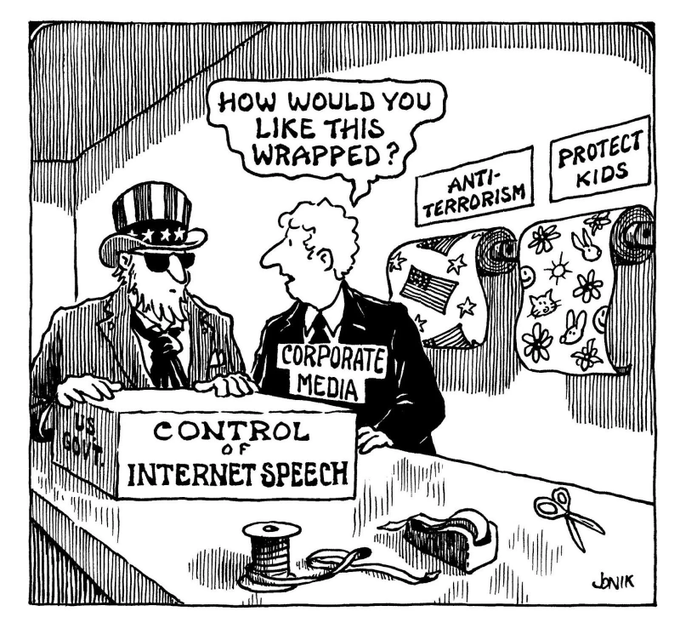

Having read thousands upon thousands of remarks on this growing scandal, I have come to see that many understand it doesn't matter, but few if any have been willing to actually say it. Speaking candidly, if that’s still allowed, that’s the way it always goes when someone of institutional significance launches a campaign to defend an indefensible intrusion into our private spaces. They make a mad dash to the supposed high ground, from which they speak in low, solemn tones about their moral mission before fervently invoking the dread spectre of the Four Horsemen of the Infopocalypse, warning that only a dubious amulet, or suspicious software update, can save us from the most threatening members of our species.

Suddenly, everybody with a principled objection is forced to preface their concern with apologetic throat-clearing and the establishment of bona fides: I lost a friend when the towers came down, however... As a parent, I understand this is a real problem, but...

As a parent, I’m here to tell you that sometimes it doesn’t matter why the man in the handsome suit is doing something. What matters are the consequences.

Apple’s new system, regardless of how anyone tries to justify it, will permanently redefine what belongs to you, and what belongs to them.

How?

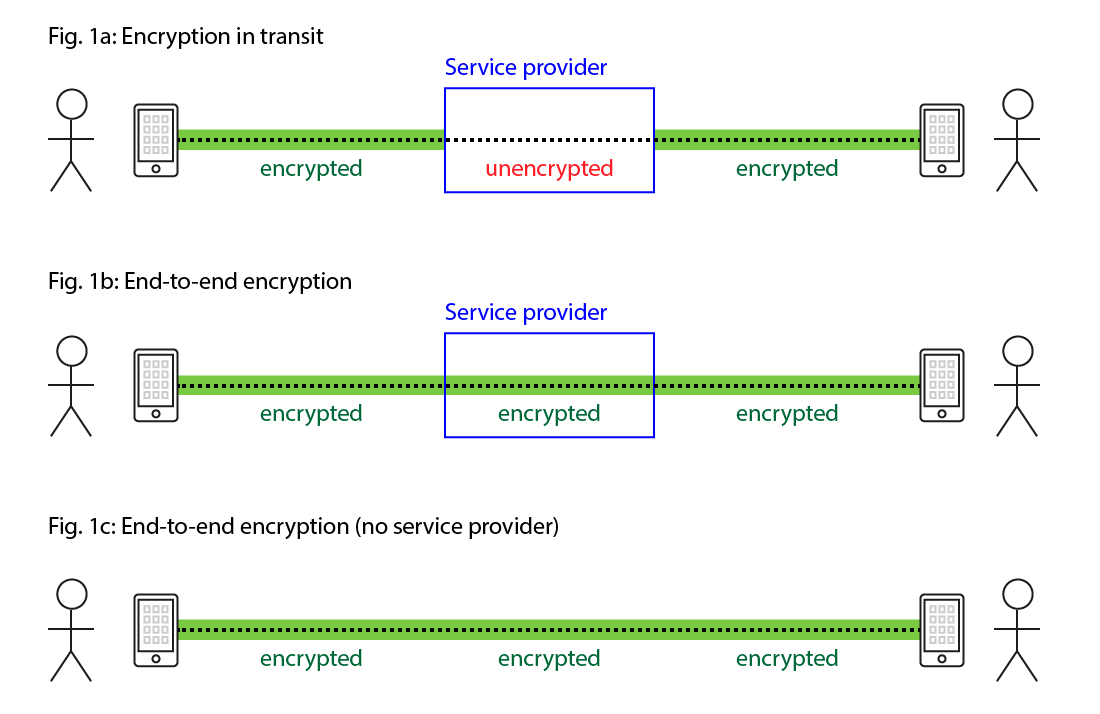

The task Apple intends its new surveillance system to perform—preventing their cloud systems from being used to store digital contraband, in this case unlawful images uploaded by their customers—is traditionally performed by searching their systems. While it’s still problematic for anybody to search through a billion people’s private files, the fact that they can only see the files you gave them is a crucial limitation.

Now, however, that’s all set to change. Under the new design, your phone will perform these searches on Apple’s behalf before your photos have even reached their iCloud servers, and (yada, yada, yada) if enough "forbidden content" is discovered, law enforcement will be notified.

I intentionally wave away the technical and procedural details of Apple’s system here, some of which are quite clever, because they, like our man in the handsome suit, merely distract from the most pressing fact—the fact that, in just a few weeks, Apple plans to erase the boundary dividing which devices work for you, and which devices work for them.

Why is this so important? Once the precedent has been set that it is fit and proper for even a "pro-privacy" company like Apple to make products that betray their users and owners, Apple itself will lose all control over how that precedent is applied. As soon as the public first came to learn of the “spyPhone” plan, experts began investigating its technical weaknesses, and the many ways it could be abused, primarily within the parameters of Apple’s design. Although these valiant vulnerability-research efforts have produced compelling evidence that the system is seriously flawed, they also seriously miss the point: Apple gets to decide whether or not their phones will monitor their owners’ infractions for the government, but it's the government that gets to decide what constitutes an infraction... and how to handle it.

For its part, Apple says their system, in its initial, v1.0 design, has a narrow focus: it only scrutinizes photos intended to be uploaded to iCloud (although for 85% of its customers, that means EVERY photo), and it does not scrutinize them beyond a simple comparison against a database of specific examples of previously-identified child sexual abuse material (CSAM).

If you’re an enterprising pedophile with a basement full of CSAM-tainted iPhones, Apple welcomes you to entirely exempt yourself from these scans by simply flipping the “Disable iCloud Photos” switch, a bypass which reveals that this system was never designed to protect children, as they would have you believe, but rather to protect their brand. As long as you keep that material off their servers, and so keep Apple out of the headlines, Apple doesn’t care.

So what happens when, in a few years at the latest, a politician points that out, and—in order to protect the children—bills are passed in the legislature to prohibit this "Disable" bypass, effectively compelling Apple to scan photos that aren’t backed up to iCloud? What happens when a party in India demands they start scanning for memes associated with a separatist movement? What happens when the UK demands they scan for a library of terrorist imagery? How long do we have left before the iPhone in your pocket begins quietly filing reports about encountering “extremist” political material, or about your presence at a "civil disturbance"? Or simply about your iPhone's possession of a video clip that contains, or maybe-or-maybe-not contains, a blurry image of a passer-by who resembles, according to an algorithm, "a person of interest"?